Calico IPIP Mode

Jan 3, 2022 22:00

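The Flannel network plugin creates a CNI bridge on the host, whereas Calico is the best-known example of a CNI implementation that works without a bridge, so a Calico node has no cni0 device: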

$ ip addr show cni0

Device "cni0" does not exist.

The Calico network plugin offers two overlay options, IPIP and VXLAN. This post covers only IPIP mode.

IPIP

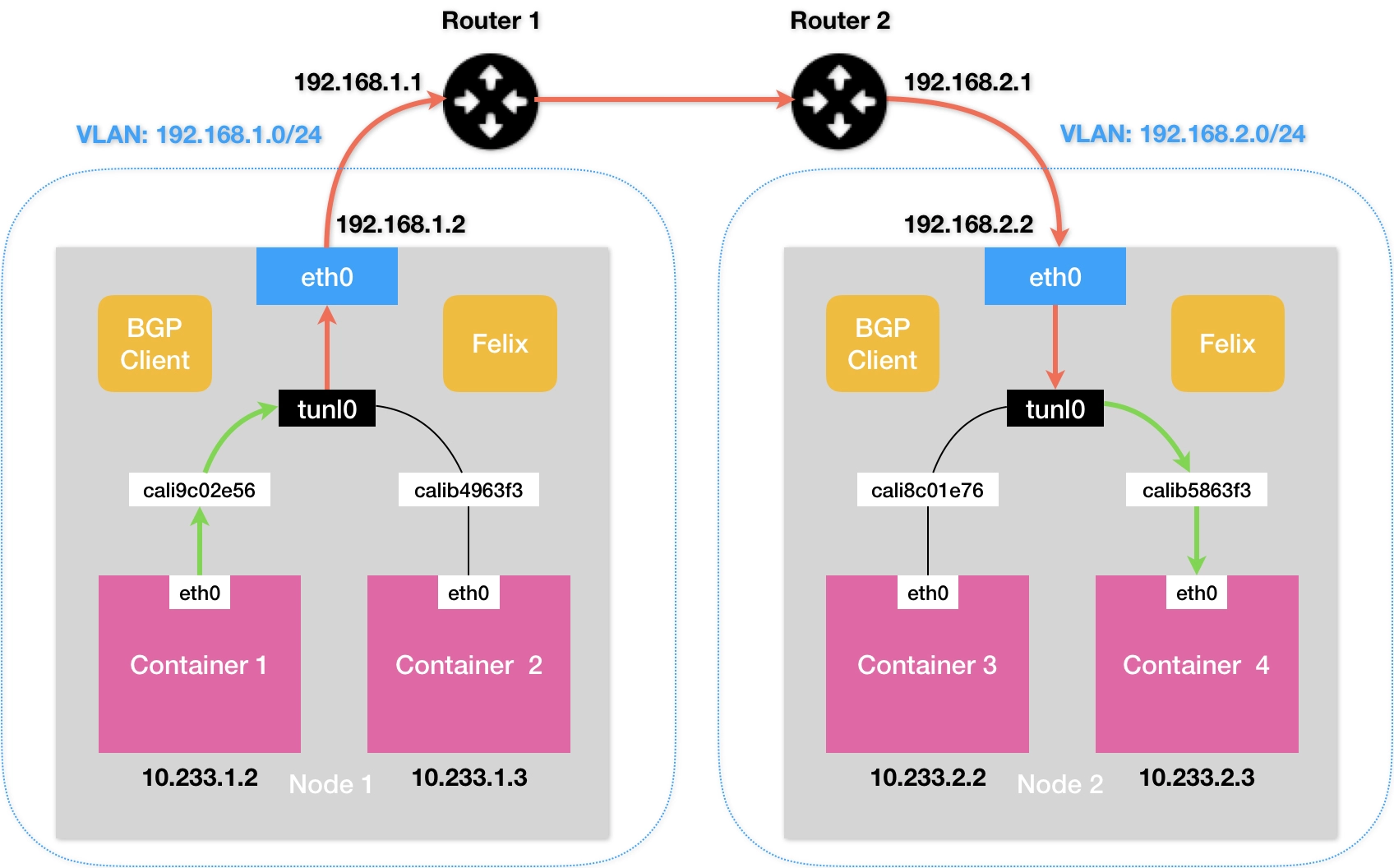

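If the nodes of a Kubernetes cluster are not in the same subnet, a node cannot deliver an IP packet to the next-hop address over the layer 2 network. IPIP mode is meant for this situation.

It is enabled by setting the environment variable CALICO_IPV4POOL_IPIP=Always for the Calico process (calico-node).

Let's trace how a packet travels from Pod A (IP 172.25.0.130) on node 1 to Pod B (IP 172.25.0.195) on node 2: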

- First, look at Pod A's network stack:

$ nsenter -n -t ${PID}
$ ip addr
1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000
    link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
    inet 127.0.0.1/8 scope host lo
       valid_lft forever preferred_lft forever
2: tunl0@NONE: <NOARP> mtu 1480 qdisc noop state DOWN group default qlen 1000
    link/ipip 0.0.0.0 brd 0.0.0.0
4: eth0@if11: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1440 qdisc noqueue state UP group default
    link/ether 92:6b:a2:6b:c8:c4 brd ff:ff:ff:ff:ff:ff link-netnsid 0
    inet 172.25.0.130/32 scope global eth0
       valid_lft forever preferred_lft forever
$ ip route
default via 169.254.1.1 dev eth0
169.254.1.1 dev eth0 scope link

When Pod A calls a service in Pod B, the destination IP is 172.25.0.195. Following the default route, the packet goes to the container's eth0 interface, which is the container end of the veth pair.

The address 169.254.1.1 is hard-coded in the Calico source, so every container in a Kubernetes cluster that uses the Calico network plugin has the same routing table.

- Now switch to the host's view:

$ ip route
default via 10.211.55.1 dev eth0 proto dhcp metric 100
10.211.55.0/24 dev eth0 proto kernel scope link src 10.211.55.101 metric 100
172.17.0.0/16 dev docker0 proto kernel scope link src 172.17.0.1
blackhole 172.25.0.128/26 proto bird
172.25.0.129 dev calib17705e4170 scope link
172.25.0.130 dev calibfd619d4ca3 scope link
172.25.0.131 dev cali35a4ac9a8fc scope link
172.25.0.192/26 via 10.211.55.116 dev tunl0 proto bird onlink

When the packet destined for 172.25.0.195 arrives at the host end of the veth pair, it matches the last route, 172.25.0.192/26 via 10.211.55.116 dev tunl0 proto bird onlink. This route was clearly created by Calico: proto bird marks it as installed by BIRD, the routing daemon Calico runs. The next hop is 10.211.55.116, which is node 2 where Pod B runs, and the outgoing device is tunl0, an IP tunnel.

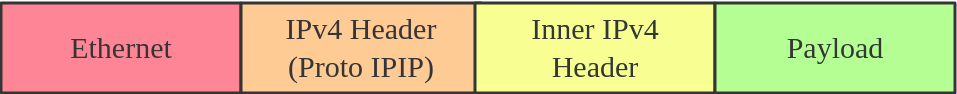

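Once the packet reaches the IP tunnel device, the Linux kernel encapsulates it in an IP packet on the host network (from 10.211.55.101 to 10.211.55.116) and sends it out through the host's eth0 interface.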

- When the IPIP packet arrives at eth0 on node 2, the kernel strips the IPIP encapsulation and recovers the original packet.

$ ip route
default via 10.211.55.1 dev eth0 proto dhcp metric 100
10.211.55.0/24 dev eth0 proto kernel scope link src 10.211.55.116 metric 100
172.17.0.0/16 dev docker0 proto kernel scope link src 172.17.0.1
172.25.0.128/26 via 10.211.55.101 dev tunl0 proto bird onlink
blackhole 172.25.0.192/26 proto bird
172.25.0.193 dev calif94e20e3327 scope link
172.25.0.194 dev cali96e8aac71b5 scope link
172.25.0.195 dev calid74ab5d8f78 scope link

The packet destined for 172.25.0.195 follows the routing table to the veth device calid74ab5d8f78 and flows to the other end inside the container.

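All the network devices and routing rules involved in this path were created by the Calico network plugin, which follows the CNI interface specification.

As with Flannel, dockershim loads the CNI configuration file /etc/cni/net.d/10-calico.conflist at startup: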

{

"name": "k8s-pod-network",

"cniVersion": "0.3.1",

"plugins": [

{

"type": "calico",

"log_level": "info",

"datastore_type": "kubernetes",

"nodename": "clipper1",

"mtu": 1440,

"ipam": {

"type": "calico-ipam"

},

"policy": {

"type": "k8s"

},

"kubernetes": {

"kubeconfig": "/etc/cni/net.d/calico-kubeconfig"

}

},

{

"type": "portmap",

"snat": true,

"capabilities": {"portMappings": true}

}

]

}

dockershim then invokes the calico and portmap plugins under /opt/cni/bin/ to set up the expected network stack for the container.

$ ll /opt/cni/bin/

total 96364

-rwxr-xr-x 1 root root 4559393 Jan 2 22:56 bandwidth

-rwxr-xr-x 1 root root 38133664 Jan 2 22:56 calico

-rwxr-xr-x 1 root root 37224224 Jan 2 22:56 calico-ipam

-rwxr-xr-x 1 root root 3069034 Jan 2 22:56 flannel

-rwxr-xr-x 1 root root 3957620 Jan 2 22:56 host-local

-rwxr-xr-x 1 root root 3650379 Jan 2 22:56 loopback

-rwxr-xr-x 1 root root 4327403 Jan 2 22:56 portmap

-rwxr-xr-x 1 root root 3736919 Jan 2 22:56 tuning
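The mapping from the configuration list to plugin binaries is simple: each entry's type is the name of an executable in the plugin directory, and the entries are run in order. A minimal sketch follows; `confList` and `pluginBinaries` are illustrative names, and `sample` is a trimmed copy of the configuration above.

```go
package main

import (
	"encoding/json"
	"fmt"
	"path"
)

// confList holds only the fields of a CNI configuration list needed here.
type confList struct {
	Name       string `json:"name"`
	CNIVersion string `json:"cniVersion"`
	Plugins    []struct {
		Type string `json:"type"`
	} `json:"plugins"`
}

// pluginBinaries returns, in chain order, the executables a runtime
// would invoke for the given configuration list.
func pluginBinaries(conf []byte, binDir string) ([]string, error) {
	var list confList
	if err := json.Unmarshal(conf, &list); err != nil {
		return nil, fmt.Errorf("failed to parse conflist: %w", err)
	}
	bins := make([]string, 0, len(list.Plugins))
	for _, p := range list.Plugins {
		bins = append(bins, path.Join(binDir, p.Type))
	}
	return bins, nil
}

// sample is a trimmed version of 10-calico.conflist from this post.
const sample = `{
  "name": "k8s-pod-network",
  "cniVersion": "0.3.1",
  "plugins": [
    {"type": "calico", "mtu": 1440, "ipam": {"type": "calico-ipam"}},
    {"type": "portmap", "snat": true, "capabilities": {"portMappings": true}}
  ]
}`

func main() {
	bins, err := pluginBinaries([]byte(sample), "/opt/cni/bin")
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	for _, b := range bins {
		fmt.Println(b)
	}
}
```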

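Creating the container network stack

When a container is created, Flannel delegates to the bridge plugin to configure the network stack in the container's network namespace. Calico instead does everything itself and implements the ADD and DEL commands on its own https://github.com/projectcalico/cni-plugin/blob/v3.11.2/pkg/plugin/plugin.go#L48-L385: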

func cmdAdd(args *skel.CmdArgs) error {

// Unmarshal the network config, and perform validation

conf := types.NetConf{}

if err := json.Unmarshal(args.StdinData, &conf); err != nil {

return fmt.Errorf("failed to load netconf: %v", err)

}

// a lot of code here

// 3) Set up the veth

hostVethName, contVethMac, err := utils.DoNetworking(

args, conf, result, logger, "", utils.DefaultRoutes)

if err != nil {

// Cleanup IP allocation and return the error.

utils.ReleaseIPAllocation(logger, conf, args)

return err

}

// a lot of code here

}

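Like other plugins, the calico plugin obtains the network namespace and related information from the arguments it is passed, and uses Linux netlink to create the veth pair https://github.com/projectcalico/cni-plugin/blob/v3.11.2/internal/pkg/utils/network_linux.go#L57-L283: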

- In the code quoted here, the host-side name of the veth pair (for example calif94e20e3327) is cali followed by the first 11 characters of the container ID

// Select the first 11 characters of the containerID for the host veth.
hostVethName = "cali" + args.ContainerID[:Min(11, len(args.ContainerID))]
contVethName := args.IfName
var hasIPv4, hasIPv6 bool

- Create the veth pair inside the container's network namespace

veth := &netlink.Veth{
	LinkAttrs: netlink.LinkAttrs{
		Name:  contVethName,
		Flags: net.FlagUp,
		MTU:   conf.MTU,
	},
	PeerName: hostVethName,
}

if err := netlink.LinkAdd(veth); err != nil {
	logger.Errorf("Error adding veth %+v: %s", veth, err)
	return err
}

hostVeth, err := netlink.LinkByName(hostVethName)
if err != nil {
	err = fmt.Errorf("failed to lookup %q: %v", hostVethName, err)
	return err
}

if mac, err := net.ParseMAC("EE:EE:EE:EE:EE:EE"); err != nil {
	logger.Infof("failed to parse MAC Address: %v. Using kernel generated MAC.", err)
} else {
	// Set the MAC address on the host side interface so the kernel does not
	// have to generate a persistent address which fails some times.
	if err = netlink.LinkSetHardwareAddr(hostVeth, mac); err != nil {
		logger.Warnf("failed to Set MAC of %q: %v. Using kernel generated MAC.", hostVethName, err)
	}
}

// Explicitly set the veth to UP state, because netlink doesn't always do that on all the platforms with net.FlagUp.
// veth won't get a link local address unless it's set to UP state.
if err = netlink.LinkSetUp(hostVeth); err != nil {
	return fmt.Errorf("failed to set %q up: %v", hostVethName, err)
}

- Configure the routing table inside the container

// Do the per-IP version set-up. Add gateway routes etc.
if hasIPv4 {
	// Add a connected route to a dummy next hop so that a default route can be set
	gw := net.IPv4(169, 254, 1, 1) // 169.254.1.1
	gwNet := &net.IPNet{IP: gw, Mask: net.CIDRMask(32, 32)}
	err := netlink.RouteAdd(
		&netlink.Route{
			LinkIndex: contVeth.Attrs().Index,
			Scope:     netlink.SCOPE_LINK,
			Dst:       gwNet,
		},
	)

	if err != nil {
		return fmt.Errorf("failed to add route inside the container: %v", err)
	}

	for _, r := range routes {
		if r.IP.To4() == nil {
			logger.WithField("route", r).Debug("Skipping non-IPv4 route")
			continue
		}
		logger.WithField("route", r).Debug("Adding IPv4 route")
		if err = ip.AddRoute(r, gw, contVeth); err != nil {
			return fmt.Errorf("failed to add IPv4 route for %v via %v: %v", r, gw, err)
		}
	}
}

This is where the address 169.254.1.1 comes from.

- Assign the IP address to the container end of the veth pair

// Now add the IPs to the container side of the veth.

for _, addr := range result.IPs {

if err = netlink.AddrAdd(contVeth, &netlink.Addr{IPNet: &addr.Address}); err != nil {

return fmt.Errorf("failed to add IP addr to %q: %v", contVeth, err)

}

}

- Move the other end of the veth pair into the host network namespace

// Now that the everything has been successfully set up in the container, move the "host" end of the

// veth into the host namespace.

if err = netlink.LinkSetNsFd(hostVeth, int(hostNS.Fd())); err != nil {

return fmt.Errorf("failed to move veth to host netns: %v", err)

}

- Add the route in the host network namespace; this is the entry we saw earlier:

172.25.0.195 dev calid74ab5d8f78 scope link

// Now that the host side of the veth is moved, state set to UP, and configured with sysctls, we can add the routes to it in the host namespace.

err = SetupRoutes(hostVeth, result)

if err != nil {

return "", "", fmt.Errorf("error adding host side routes for interface: %s, error: %s", hostVeth.Attrs().Name, err)

}
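The naming rule from the first step above can be isolated into a small, runnable sketch. `hostVethName` is a standalone helper written for this example, and the container ID used in `main` is made up. The 11-character cut keeps the name within Linux's 15-character limit for interface names (IFNAMSIZ is 16 including the trailing NUL).

```go
package main

import "fmt"

// maxIfNameLen is the longest interface name Linux accepts.
const maxIfNameLen = 15

// hostVethName builds the host-side veth name from the "cali" prefix and
// at most the first 11 characters of the container ID.
func hostVethName(containerID string) string {
	n := len(containerID)
	if n > 11 {
		n = 11
	}
	return "cali" + containerID[:n]
}

func main() {
	// Hypothetical container ID, used only for illustration.
	name := hostVethName("f94e20e3327a0c5d9b1e")
	fmt.Println(name, len(name) <= maxIfNameLen)
}
```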

Creating the IPIP tunnel device

The IPIP tunnel device named tunl0 is created by the calico-node process https://github.com/projectcalico/node/blob/v3.11.2/pkg/startup/startup.go#L814-L874:

// createIPPool creates an IP pool using the specified CIDR. This

// method is a no-op if the pool already exists.

func createIPPool(ctx context.Context, client client.Interface, cidr *cnet.IPNet, poolName, ipipModeName, vxlanModeName string, isNATOutgoingEnabled bool, blockSize int, nodeSelector string) {

version := cidr.Version()

var ipipMode api.IPIPMode

var vxlanMode api.VXLANMode

// Parse the given IPIP mode.

switch strings.ToLower(ipipModeName) {

case "", "off", "never":

ipipMode = api.IPIPModeNever

case "crosssubnet", "cross-subnet":

ipipMode = api.IPIPModeCrossSubnet

case "always":

ipipMode = api.IPIPModeAlways

default:

log.Errorf("Unrecognized IPIP mode specified in CALICO_IPV4POOL_IPIP '%s'", ipipModeName)

terminate()

}

// a lot of code here

pool := &api.IPPool{

ObjectMeta: metav1.ObjectMeta{

Name: poolName,

},

Spec: api.IPPoolSpec{

CIDR: cidr.String(),

NATOutgoing: isNATOutgoingEnabled,

IPIPMode: ipipMode,

VXLANMode: vxlanMode,

BlockSize: blockSize,

NodeSelector: nodeSelector,

},

}

if _, err := client.IPPools().Create(ctx, pool, options.SetOptions{}); err != nil {

// a lot of code here

}

}

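This in turn calls into the libcalico-go project.

Summary

Like VXLAN, Calico's IPIP mode pays a substantial performance cost, because every cross-node packet has to be encapsulated and decapsulated by the IPIP tunnel. When planning a Kubernetes cluster, it is better to place all nodes in a single subnet so that an IPIP overlay network is not needed.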
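What the three modes mean for traffic can be summarised in a few lines. The sketch below repeats the string parsing from createIPPool but returns an error instead of terminating, and adds a hypothetical `shouldEncapsulate` helper that states the semantics: Always tunnels all cross-node pod traffic, CrossSubnet tunnels only when the two nodes are in different subnets, and Never does not tunnel. The type and function names are illustrative, not Calico's API.

```go
package main

import (
	"fmt"
	"strings"
)

// ipipMode is an illustrative stand-in for Calico's IPIP mode values.
type ipipMode string

const (
	ipipNever       ipipMode = "Never"
	ipipCrossSubnet ipipMode = "CrossSubnet"
	ipipAlways      ipipMode = "Always"
)

// parseIPIPMode accepts the same spellings as the switch in createIPPool.
func parseIPIPMode(name string) (ipipMode, error) {
	switch strings.ToLower(name) {
	case "", "off", "never":
		return ipipNever, nil
	case "crosssubnet", "cross-subnet":
		return ipipCrossSubnet, nil
	case "always":
		return ipipAlways, nil
	default:
		return "", fmt.Errorf("unrecognized IPIP mode %q", name)
	}
}

// shouldEncapsulate reports whether pod traffic between two nodes is
// tunnelled, given the pool's mode and whether the nodes share a subnet.
func shouldEncapsulate(mode ipipMode, sameSubnet bool) bool {
	switch mode {
	case ipipAlways:
		return true
	case ipipCrossSubnet:
		return !sameSubnet
	default:
		return false
	}
}

func main() {
	for _, name := range []string{"Always", "CrossSubnet", "Never"} {
		mode, err := parseIPIPMode(name)
		if err != nil {
			fmt.Println("error:", err)
			continue
		}
		fmt.Printf("%s: same subnet=%v, different subnet=%v\n",
			mode, shouldEncapsulate(mode, true), shouldEncapsulate(mode, false))
	}
}
```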